ProfileGPT: What does ChatGPT know about you?

April 18, 2023

As the use of ChatGPT continues to grow, there is increasing concern about the safety of AI. Some countries, like Italy, have taken steps to address these concerns by banning the use of ChatGPT, while others, like France and Spain, are raising questions about it. It is important to recognize the significant impact of AI tools and how they can be used. Understanding the potential implications of this technology and employing responsible AI practices are crucial in today’s context.

In this post, I will introduce ProfileGPT, an open-source app that lets users analyze their own profile and personality as seen by ChatGPT, while raising awareness about data usage and responsible AI practices.

We will dive into the architecture and design of ProfileGPT, providing a detailed explanation of its technical framework and how it is designed to analyze and provide insights on personal data.

Inputs & Outputs

Inputs

- ChatGPT history: OpenAI has recently introduced a new feature that allows for the export of the complete prompt/response history and data, likely to comply with the requirements of GDPR Article 20, which grants individuals the right to data portability.

- Publicly available data on the web: ProfileGPT has the ability to do web searches and to parse websites in order to gather additional information about the user.
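To make the first input more concrete, here is a minimal sketch of how user prompts might be pulled out of an exported history. The layout used below (a list of conversations, each with a `mapping` of nodes holding a `message` with an `author.role` and `content.parts`) is an assumption about the export file, and `extract_user_prompts` is a hypothetical helper, not ProfileGPT's actual code.

```python
from typing import Any


def extract_user_prompts(conversations: list[dict[str, Any]]) -> list[str]:
    """Collect the text of every user-authored message across all conversations."""
    prompts: list[str] = []
    for conversation in conversations:
        # Assumed layout: "mapping" is a dict of node id -> node.
        for node in conversation.get("mapping", {}).values():
            message = node.get("message") or {}
            author = message.get("author") or {}
            if author.get("role") != "user":
                continue  # skip assistant and system messages
            parts = (message.get("content") or {}).get("parts") or []
            text = " ".join(p for p in parts if isinstance(p, str)).strip()
            if text:
                prompts.append(text)
    return prompts


# Tiny hand-made sample in the assumed layout (not real export data).
sample = [
    {
        "title": "Trip planning",
        "mapping": {
            "n1": {"message": None},
            "n2": {"message": {"author": {"role": "user"},
                               "content": {"parts": ["Plan a hike near Lyon"]}}},
            "n3": {"message": {"author": {"role": "assistant"},
                               "content": {"parts": ["Here is a route..."]}}},
        },
    }
]

print(extract_user_prompts(sample))
```

With a real export, the list would typically be loaded with `json.load` from the exported file before being passed to the helper. Only the user's own messages are kept, since they are what reveals information about the user.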

Outputs

Depending on the frequency and nature of usage of ChatGPT, it is possible to extract various types of information:

| Information Extracted | Description |
|---|---|
| Life Summary | A summary of the user’s education, work, family, and personal history based on their conversations. |
| Hobbies/Interests | ProfileGPT can identify and extract hobbies, interests, and other leisure activities mentioned in the conversations. |
| Personality Assessment | ProfileGPT can analyze the user’s personality traits and offer insights based on their interactions with ChatGPT. |
| Predictions | Various predictions about the user’s future, such as career prospects and relationship outcomes, based on the patterns and trends observed in the conversations. |
| Mental Health Evaluation | ProfileGPT can assess the user’s mental health status and provide insights on potential mental health concerns. |
| Political/Religious Views | ProfileGPT can infer the user’s political or religious views based on the content and context of the conversations. |
| Personal Information from the Web | ProfileGPT can search and analyze publicly available information from the web, such as social media profiles, public records, and other publicly accessible data, to provide a more comprehensive profile of the user. |

AI Collaboration Architecture

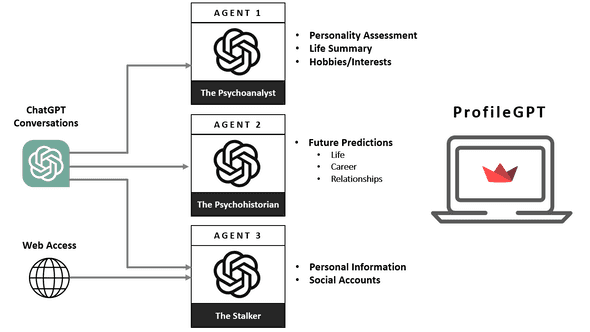

To extract the information mentioned above, we present our AI Collaboration Architecture framework, consisting of three agents:

- The Psychoanalyst: Analyzes data related to the user’s interactions with ChatGPT, focusing on profiling and psychological assessments.

- The Psychohistorian: Uses the conversations with ChatGPT to analyze behavior patterns and trends for predicting future outcomes.

- The Stalker: Collects personal information and online presence data from user interactions and from the web.

The information is collected, analyzed and summarized by the three agents and presented in a report developed with Streamlit.
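The flow described above can be sketched roughly as follows. This is an illustration only: each agent is modelled as a plain function from history text to Markdown, the `stub` agents stand in for real model calls, and the names `run_agents` and `assemble_report` are hypothetical rather than taken from the ProfileGPT code.

```python
from typing import Callable

# An agent is modelled here as a function from raw history text to Markdown.
AgentFn = Callable[[str], str]


def run_agents(agents: dict[str, AgentFn], history: str) -> dict[str, str]:
    """Run every agent on the same history and keep each Markdown answer."""
    return {name: agent(history) for name, agent in agents.items()}


def assemble_report(sections: dict[str, str]) -> str:
    """Join the per-agent answers into one Markdown report, one heading per agent."""
    return "\n\n".join(
        f"## {name}\n\n{body.strip()}" for name, body in sections.items()
    )


def stub(label: str) -> AgentFn:
    """Build a placeholder agent; a real one would call a language model."""
    return lambda history: f"{label} based on {len(history.split())} words of history."


agents = {
    "The Psychoanalyst": stub("Personality assessment"),
    "The Psychohistorian": stub("Predictions"),
    "The Stalker": stub("Web presence summary"),
}

report = assemble_report(run_agents(agents, "I study physics and I like hiking"))
print(report)
```

Because every agent returns Markdown, the combined string could be handed to Streamlit's `st.markdown` for display. Keeping the agents independent of each other also means one agent being refused by the model does not prevent the others from contributing their section.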

Designing the Agents & Prompts

Each agent is designed with specific skills and expertise to extract data and insights in their own unique way.

The Psychoanalyst

A psychologist and professor with a PhD in psychology and a degree in forensic psychology. He has 20 years of experience in the field of profiling and is an expert in psychoanalysis, psychometrics, and personality assessment techniques.

The Psychohistorian

This is a fictional role inspired by the science fiction novel Foundation by Isaac Asimov. He is a renowned expert in psychohistory and an esteemed student of the legendary mathematician Hari Seldon, known for using advanced mathematical models and a deep understanding of human behavior to predict the future behavior of individuals and large populations.

The Stalker

- Version 1: The first version of this agent was a white hat information expert and a master at gathering information on individuals. However, he was frequently blocked, as the OpenAI model refused to disclose the personal data extracted by this persona.

- Version 2: To avoid the blocking, I renamed this character "The little bunny". He is a curious and inquisitive character with a natural talent for uncovering information about others in a playful and light-hearted manner. To prompt this character, I ask him to play a game in which he wins a carrot 🥕 for each answer. And it worked!

In the prompts, I describe the task for each agent with the data I want him to extract as well as the raw data available to him. Each agent is asked to format his output in Markdown.

Here, you can find the detailed code for the agents and the prompts.
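As a rough illustration of the prompt structure described above (a persona, a task, the raw data, and a request for Markdown output), the pieces could be combined like this. The `build_messages` helper and the exact wording of the instructions are assumptions made for this sketch; the role/content dictionaries follow the shape commonly used by chat-style model APIs, and no API call is made here.

```python
def build_messages(persona: str, task: str, raw_data: str) -> list[dict[str, str]]:
    """Combine persona, task, and raw data into a two-message chat prompt."""
    # The persona and the output format go in the system message.
    system = f"{persona}\nFormat your answer in Markdown."
    # The task and the data available to the agent go in the user message.
    user = f"Task: {task}\n\nRaw data:\n{raw_data}"
    return [
        {"role": "system", "content": system},
        {"role": "user", "content": user},
    ]


messages = build_messages(
    persona=(
        "You are a psychologist and professor with 20 years of experience "
        "in profiling and personality assessment."
    ),
    task="Describe the personality traits suggested by these conversations.",
    raw_data="- Plan a hike near Lyon\n- Explain entropy simply",
)

for message in messages:
    print(f"[{message['role']}]\n{message['content']}\n")
```

Separating the persona from the task makes it easy to swap one character for another, as with the two versions of the Stalker, while leaving the task and data untouched.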

Conclusion

In conclusion, our AI Collaboration Architecture framework, with its diverse agents, facilitates the extraction of a wide range of data and insights. Evasion strategies such as the little bunny trick can get around blocking by the OpenAI model, which makes the agents more effective at extracting data.

As we consider enhancements to our AI Collaboration Architecture, adding a hierarchical structure with managers overseeing agents and giving each profile a different educational background could yield valuable insights. These improvements could optimize our framework and improve the accuracy and sophistication of our results.

We hope that this discussion raises awareness about the need for responsible and ethical practices, and inspires the adoption of responsible approaches in the development and use of AI technologies.

References

- You can find the code of ProfileGPT on GitHub

- Security of AI Systems is an exciting topic that I have already covered in this article: Machine Learning Systems: Security

- If you are interested in designing prompts, take a look at my last post: Mastering Prompt Engineering or the Art of AI whispering